From Prompting to Context Engineering: How AI Workflows Are Evolving

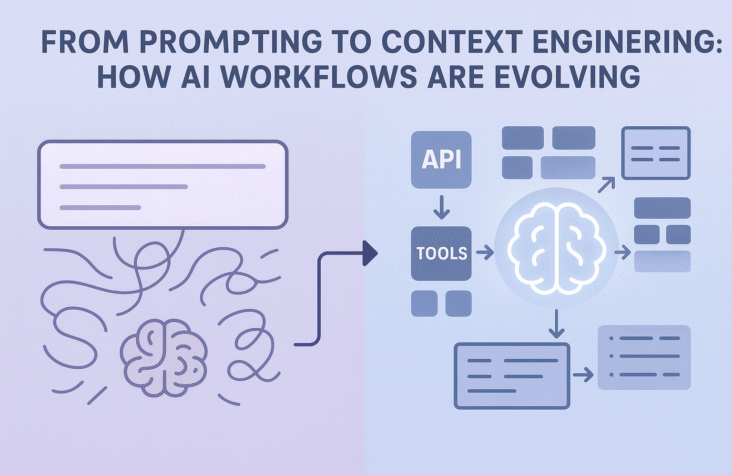

The more I work with LLMs, the more I realise that “good prompts” are not enough. They help with quick experiments, but they fall apart when you try to build something reliable, repeatable and ready for real users.

What actually matters in production is not the clever sentence you type into the model; it is everything around it. That surrounding structure is what people are now calling context engineering.

Recent tech trend reports describe context engineering as the practice of carefully preparing and feeding structured background information to an AI model so it can perform a task reliably, instead of relying on ad hoc prompts. Thoughtworks even compares it to teaching AI to “skip stones” across the right pieces of context rather than dumping the entire lake into the prompt.

What is context engineering, really?

At a simple level, context engineering answers one question:

What should the model see, in what shape, and at what moment, so it can do its job well?

That usually involves a few building blocks:

- Retrieval patterns like retrieval-augmented generation (RAG) to pull only the most relevant documents instead of pasting entire wikis.

- Structure such as schemas, metadata, sections and roles, so the model understands which parts of the context matter.

- Orchestration, where agents or tools decide when to search, when to call an API, when to ask follow-up questions and when to stop.

Prompting is still there, but it becomes the thin interface on top of a much richer workflow.
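Here is a minimal Python sketch of the first two building blocks, retrieval and structure. It answers the question “what should the model see, and in what shape?”. It assumes a tiny in-memory document list and ranks documents by shared words. That ranking is a crude stand-in for the embedding search a real vector store would do. `Doc`, `retrieve` and `build_context` are hypothetical names, not part of any library.

```python
import re
from dataclasses import dataclass


@dataclass
class Doc:
    doc_id: str
    source: str  # hypothetical metadata field, e.g. "pricing-wiki"
    text: str


def tokens(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[Doc], k: int = 2) -> list[Doc]:
    """Rank docs by how many query words they share and keep the top k."""
    q = tokens(query)
    scored = [(len(q & tokens(d.text)), d) for d in docs]
    scored = [pair for pair in scored if pair[0] > 0]  # drop docs with no overlap
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep input order
    return [d for _, d in scored[:k]]


def build_context(task: str, question: str, docs: list[Doc]) -> str:
    """Assemble labelled sections so the model can tell instructions from data."""
    doc_blocks = "\n".join(f"[{d.doc_id} | source={d.source}]\n{d.text}" for d in docs)
    return (
        f"## Task\n{task}\n\n"
        f"## Reference documents\n{doc_blocks or '(none found)'}\n\n"
        f"## Question\n{question}"
    )


docs = [
    Doc("d1", "pricing-wiki", "The Pro plan costs 20 EUR per seat per month."),
    Doc("d2", "hr-handbook", "Employees get 25 days of annual leave."),
    Doc("d3", "pricing-wiki", "Annual billing gives a 10 percent discount on the Pro plan."),
]
question = "How much does the Pro plan cost?"
print(build_context("Answer using only the reference documents.", question, retrieve(question, docs)))
```

The HR document is never sent, which saves tokens. The labelled sections let the model tell instructions, reference data and the user's question apart. Each document also carries its source, so an answer can be traced back.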

Why prompting alone breaks in production

Simple prompting usually fails in these scenarios:

- High stakes

When the answer drives money, compliance or health, you cannot accept random hallucinations. You need grounding in real data, audit trails and clear guardrails.

- Complex tasks

Multi-step tasks like “analyse these contracts, compare them with our policy, then draft a summary email” need planning, tools and memory, not just a single prompt.

- Evolving knowledge

Models are trained on static snapshots. If you want them to stay current on your internal policies, pricing or product docs, you have to connect fresh external data through retrieval and other context patterns.

Without that structure, you end up with brittle behaviour: it works in demos and fails quietly in real life.
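In the high-stakes case, one simple form of grounding and audit trail works like this. The model must cite the documents it was given, and every exchange gets logged. The sketch below assumes answers cite sources as `[doc_id]`. That citation format and the `check_grounding` and `audit_record` helpers are illustrative assumptions, not a standard.

```python
import json
import re
from datetime import datetime, timezone


def cited_ids(answer: str) -> set[str]:
    """Extract citations written as [doc_id], e.g. "[d1]" (assumed format)."""
    return set(re.findall(r"\[([A-Za-z0-9_-]+)\]", answer))


def check_grounding(answer: str, retrieved_ids: set[str]) -> list[str]:
    """Return a list of problems; an empty list means the answer passed."""
    problems = []
    cites = cited_ids(answer)
    if not cites:
        problems.append("answer cites no source")
    unknown = cites - retrieved_ids
    if unknown:
        problems.append(f"cites documents that were not retrieved: {sorted(unknown)}")
    return problems


def audit_record(question: str, answer: str, retrieved_ids: set[str]) -> str:
    """Serialise what the model saw and said, so it can be reviewed later."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "question": question,
        "retrieved": sorted(retrieved_ids),
        "answer": answer,
        "problems": check_grounding(answer, retrieved_ids),
    })


retrieved = {"d1", "d3"}
print(check_grounding("The Pro plan costs 20 EUR per seat [d1].", retrieved))  # []
print(check_grounding("It is free [d9].", retrieved))  # flags the unknown d9
```

A non-empty problem list is the signal to block the answer or route it to a human. The JSON lines can go to whatever log store you already use.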

How I think about designing AI workflows now

When I design an AI feature, I no longer start with “what is the perfect prompt”. I start with four questions:

- Task

What is the real unit of work? Summarise, classify, route, reason, generate, or decide.

- Context

What does the model actually need to see, and what should we keep away from it to save tokens and reduce confusion?

- Guardrails

What is allowed, what is forbidden, and how do we check outputs against those rules?

- Feedback

Where will human feedback enter the loop so the system improves instead of drifting?
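One way to keep these four questions off the whiteboard is to write them down as a small spec object. This is a hypothetical sketch: `WorkflowSpec`, the ticket-routing example and its label set are invented for illustration. A real system would also persist feedback somewhere durable rather than in an in-memory list.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# A guardrail inspects an output and returns an error message, or None if it passes.
Guardrail = Callable[[str], Optional[str]]


@dataclass
class WorkflowSpec:
    task: str                    # the real unit of work
    context_sources: list[str]   # what the model is allowed to see
    guardrails: list[Guardrail]  # rules every output is checked against
    feedback: list[dict] = field(default_factory=list)  # human verdicts land here

    def check(self, output: str) -> list[str]:
        """Run every guardrail and collect the failures."""
        errors = []
        for rule in self.guardrails:
            message = rule(output)
            if message is not None:
                errors.append(message)
        return errors

    def record_feedback(self, output: str, correct: bool, note: str = "") -> None:
        """Store a reviewer verdict so it can feed evaluation sets later."""
        self.feedback.append({"output": output, "correct": correct, "note": note})


ALLOWED_LABELS = {"billing", "technical", "other"}


def label_is_allowed(output: str) -> Optional[str]:
    """Guardrail: the model may only answer with one of the allowed labels."""
    if output.strip().lower() not in ALLOWED_LABELS:
        return f"unexpected label: {output!r}"
    return None


ticket_router = WorkflowSpec(
    task="classify support tickets into billing, technical or other",
    context_sources=["ticket text", "customer plan tier"],
    guardrails=[label_is_allowed],
)
print(ticket_router.check("billing"))  # []
print(ticket_router.check("refund!"))  # ["unexpected label: 'refund!'"]
ticket_router.record_feedback("billing", correct=False, note="was actually technical")
```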

Only after that do I write the prompts, often as small, focused pieces inside a bigger flow.

Practical steps to move from prompting to context engineering

If you are already playing with LLMs, here is how you can upgrade your approach:

- Map your data to tasks

List your key workflows, then list which internal data sources support each one. That gives you a blueprint for retrieval and routing.

- Introduce a thin retrieval layer

Even a basic vector store or search index connected to your app is a big step up from copy-paste. Start with one workflow and measure before and after.

- Standardise system prompts

Treat your core system messages like code: version them, document them and keep them close to your business rules.

- Think agents, not monoliths

Instead of one giant prompt that does everything, compose smaller agents or steps. Each step has its own role, context and tests.

- Measure behaviour, not just vibes

Track accuracy, latency, rejection rates and escalation to humans. Context engineering is only useful if it moves these numbers in the right direction.
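The first, third and fifth steps translate almost directly into code. The sketch below uses made-up workflow names, data sources and prompt texts, so treat all of them as placeholders. The point is the shape:

- an explicit task-to-source map
- system prompts keyed by name and version
- a small counter for the metrics listed above

```python
import time
from dataclasses import dataclass

# Map each workflow to the data sources that support it (hypothetical names).
TASK_SOURCES = {
    "answer_pricing_question": ["pricing-wiki", "plan-catalogue"],
    "summarise_contract": ["contract-store", "policy-docs"],
}

# System prompts live in code, keyed by (name, version), so changes go through review.
SYSTEM_PROMPTS = {
    ("pricing_assistant", "v1"): "Answer pricing questions using only the provided documents.",
    ("pricing_assistant", "v2"): (
        "Answer pricing questions using only the provided documents. "
        "If the documents do not contain the answer, say so and escalate."
    ),
}


def get_prompt(name: str, version: str) -> str:
    """Look up a pinned prompt version; a KeyError means an unknown name or version."""
    return SYSTEM_PROMPTS[(name, version)]


@dataclass
class Metrics:
    total: int = 0
    correct: int = 0
    rejected: int = 0   # outputs blocked by guardrails
    escalated: int = 0  # requests handed to a human
    latency_s: float = 0.0

    def record(self, *, correct: bool, rejected: bool, escalated: bool, latency_s: float) -> None:
        self.total += 1
        self.correct += correct  # bools add as 0 or 1
        self.rejected += rejected
        self.escalated += escalated
        self.latency_s += latency_s

    def summary(self) -> dict:
        n = max(self.total, 1)  # avoid division by zero before any traffic
        return {
            "accuracy": self.correct / n,
            "rejection_rate": self.rejected / n,
            "escalation_rate": self.escalated / n,
            "avg_latency_s": self.latency_s / n,
        }


metrics = Metrics()
start = time.perf_counter()
prompt = get_prompt("pricing_assistant", "v2")
# ... call your model with `prompt` and the retrieved context here ...
metrics.record(correct=True, rejected=False, escalated=False, latency_s=time.perf_counter() - start)
metrics.record(correct=False, rejected=True, escalated=True, latency_s=0.8)
print(metrics.summary())
```

Pinning a prompt version in each call makes a before/after comparison possible. Run the same workflow with `v1` and `v2` and compare the two summaries.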

Conclusion

Prompting was a great starting point. It made AI feel accessible. But the real shift in the market is now towards context-aware AI workflows that are testable, observable and aligned with real business processes.

For me, context engineering is the missing layer between “LLM as a toy” and “LLM as a dependable teammate”. The sooner we design around context instead of only chasing better prompts, the sooner our AI features start to look like real products, not just clever demos.

Frequently Asked Questions

What is the difference between prompt engineering and context engineering?

Prompt engineering focuses on how you phrase instructions to the model. Context engineering focuses on everything around that prompt, including which data you retrieve, how you structure it, how you route it between tools and how you control the workflow end to end.

Why is context engineering becoming so important now?

As companies move from small experiments to full products, they discover that reliability, security and compliance matter more than clever wording. Context engineering gives you a way to tame hallucinations, connect live data and design predictable behaviour at scale.

Is RAG the same thing as context engineering?

RAG is one pattern inside context engineering. It covers the idea of retrieving relevant documents and attaching them to the prompt. Context engineering is broader and also includes schema design, metadata, routing, agents, evaluation and governance.

Do I need agents to practice context engineering?

Not always. You can start with simple flows where your application decides when to search, when to call the model and how to validate responses. Agents become useful when tasks are long-running, multi-step or need independent decision making.
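Here is a minimal sketch of such a flow. Plain application code, not an agent, decides whether to search, calls the model and validates the reply before returning it. `search` and `model` are hypothetical callables that you would back with your own search index and LLM client. The keyword routing rule and the "non-empty answer" check are deliberately simplistic placeholders.

```python
from typing import Callable

# Hypothetical signatures: search returns text snippets, model maps a prompt to a reply.
SearchFn = Callable[[str], list[str]]
ModelFn = Callable[[str], str]

LOOKUP_KEYWORDS = ("price", "policy", "plan", "contract")


def needs_lookup(question: str) -> bool:
    """Crude routing rule: questions about company facts go through search first."""
    return any(word in question.lower() for word in LOOKUP_KEYWORDS)


def answer(question: str, search: SearchFn, model: ModelFn, max_attempts: int = 2) -> dict:
    lookup = needs_lookup(question)
    snippets = search(question) if lookup else []
    if lookup and not snippets:
        # Hand off rather than let the model guess without grounding.
        return {"status": "escalate", "reason": "no supporting documents"}
    prompt = "\n".join(["Context:", *snippets, "Question:", question])
    for _ in range(max_attempts):
        reply = model(prompt)
        if reply.strip():  # placeholder validation: reject empty replies
            return {"status": "ok", "answer": reply.strip(), "used_search": lookup}
    return {"status": "escalate", "reason": "model output failed validation"}


def fake_search(query: str) -> list[str]:
    return ["The Pro plan costs 20 EUR per seat per month."]


def fake_model(prompt: str) -> str:
    return "20 EUR per seat per month."


print(answer("What is the price of the Pro plan?", fake_search, fake_model))
print(answer("Write a haiku about Mondays", fake_search, lambda p: ""))
```

All decisions live in ordinary `if` statements, so they are easy to read, log and test. You can move to an agent later, if the routing logic outgrows this.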

How can a backend or full-stack developer start with context engineering?

Pick one real workflow in your product, add a retrieval layer for its data, create a clear system prompt that explains the task and rules, and wrap the model call in a small service that you can test like any other piece of code.
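To illustrate that last step, here is a hypothetical contract-summary service in Python. The `ChatModel` protocol is an assumption standing in for whatever LLM client you use. The system prompt and the three-bullet rule are also made up for the example. Because the service depends only on that interface, a fake model can drive it in unit tests without network calls.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Anything with this method can be plugged in: a real client wrapper or a fake."""

    def complete(self, system: str, user: str) -> str: ...


SYSTEM_PROMPT = (
    "You summarise customer contracts. Use only the text provided. "
    "Reply with at most three bullet points."
)


class ContractSummaryService:
    def __init__(self, model: ChatModel, max_chars: int = 20_000) -> None:
        self.model = model
        self.max_chars = max_chars

    def summarise(self, contract_text: str) -> str:
        if not contract_text.strip():
            raise ValueError("contract text is empty")
        # Trim oversized input instead of sending everything to the model.
        user = contract_text[: self.max_chars]
        reply = self.model.complete(SYSTEM_PROMPT, user)
        # Validate the shape of the reply: keep bullet lines only, at most three.
        bullets = [line for line in reply.splitlines() if line.strip().startswith("-")]
        if not bullets:
            raise ValueError("model reply did not contain bullet points")
        return "\n".join(bullets[:3])


class FakeModel:
    """Test double that returns a canned reply and records what it was sent."""

    def __init__(self, reply: str) -> None:
        self.reply = reply
        self.calls: list[tuple[str, str]] = []

    def complete(self, system: str, user: str) -> str:
        self.calls.append((system, user))
        return self.reply


fake = FakeModel("- Term: 12 months\n- Notice: 30 days\n- Price: fixed\n- Extra line")
service = ContractSummaryService(fake, max_chars=10)
print(service.summarise("This agreement runs for twelve months."))
```

In a real project the fake would sit inside a pytest or unittest test case. A change to the system prompt, the trimming rule or the output check would then surface as a failing test instead of a silent behaviour change.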