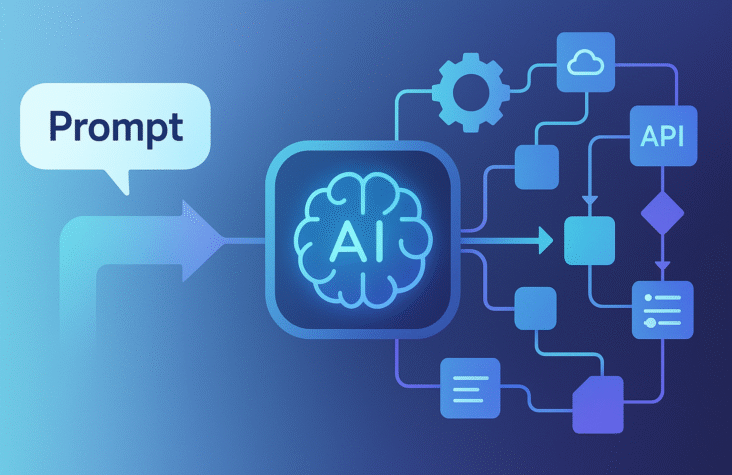

How AI Agents Turn Prompts into Full Workflows

When people talk about “AI agents,” it often sounds like magic. From a developer's perspective, it is much more practical. An agent is just an LLM wrapped with tools, memory and a control loop so it can move from a single prompt to an actual workflow.

I like to think of agentic AI as a small distributed system, where each “service” happens to be powered by an LLM.

What agentic AI really is for developers

Agentic AI is a pattern where you give the system a goal, not a one-line prompt. The system then:

- Plans the steps

- Chooses which tools or APIs to call

- Reads and writes from memory or a vector store

- Decides when to ask the human for help

Under the hood, most modern stacks follow a similar shape:

- LLM for reasoning

- Tool layer for external actions

- Memory layer for context and history

- Orchestrator to manage steps and agents

You can implement this from scratch with your own state machine, or you can use agent frameworks that already implement these patterns.
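To make the "from scratch" option concrete, here is a minimal sketch of the four pieces in one loop. Everything in it is hypothetical: `fake_llm` stands in for a real model call, `TOOLS` is a one-entry tool layer, and the `history` list plays the role of memory. It only illustrates the shape of the control loop, not any particular framework's API.

```python
from typing import Callable

# Hypothetical tool layer: plain functions keyed by name.
TOOLS: dict[str, Callable[..., str]] = {
    "add": lambda a, b: str(a + b),
}


def fake_llm(goal: str, history: list[dict]) -> dict:
    """Stand-in for a real model call: returns the next action as a dict."""
    if not history:
        # First turn: ask for a tool call.
        return {"type": "tool", "name": "add", "args": {"a": 2, "b": 3}}
    # Later turns: finish, using the last tool result.
    return {"type": "final", "answer": f"{goal} -> {history[-1]['result']}"}


def run_agent(goal: str, llm=fake_llm, max_steps: int = 5) -> str:
    history: list[dict] = []  # memory layer: prior actions and results
    for _ in range(max_steps):  # orchestrator: a bounded control loop
        action = llm(goal, history)  # LLM decides the next step
        if action["type"] == "final":
            return action["answer"]
        tool = TOOLS[action["name"]]  # tool layer: look up and execute
        result = tool(**action["args"])
        history.append({"action": action, "result": result})
    raise RuntimeError("agent did not finish within max_steps")


print(run_agent("sum 2 and 3"))  # prints: sum 2 and 3 -> 5
```

The `max_steps` bound is a deliberate choice: a loop driven by model output should always have a hard stop.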

Popular options include:

- LangChain and LangGraph for Python or JavaScript

- OpenAI Assistants API for hosted tools and code interpreter style flows

- Microsoft Semantic Kernel for .NET and C#

- CrewAI, AutoGen and similar multi-agent libraries in Python

Each one gives you slightly different control over planning, tools and state.

Core building blocks with concrete ideas

When designing an agentic system, I usually think in four layers.

1. Planner

The planner receives an instruction like

“Every day at 9 am, read yesterday’s support tickets, group them by theme, generate a summary for the team and tag tickets that need urgent follow up.”

The planner is an LLM prompt that turns this into a structured plan. For example:

- Fetch tickets from API for yesterday

- Group tickets by topic

- Detect tickets with “urgent” signals

- Generate markdown summary

- Post summary to Slack channel

- Tag urgent tickets in helpdesk

In LangGraph, this lives as a graph of nodes and edges that you define. In OpenAI Assistants, you might rely more on tool calling and your own orchestration code that checks when to call what.
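Whatever framework you use, it helps to treat the plan as data you can validate before anything runs. The sketch below assumes the planner prompt has been instructed to return a JSON list of steps; the step names, the `Step` class and `parse_plan` are illustrative names, not part of any library.

```python
import json
from dataclasses import dataclass

# Hypothetical step names, mirroring the example plan above.
ALLOWED_STEPS = {
    "fetch_tickets", "group_by_topic", "detect_urgent",
    "generate_summary", "post_to_slack", "tag_urgent",
}


@dataclass
class Step:
    name: str
    args: dict


def parse_plan(raw: str) -> list[Step]:
    """Parse the planner's JSON output and reject steps we do not know."""
    plan = []
    for item in json.loads(raw):
        if item["name"] not in ALLOWED_STEPS:
            raise ValueError(f"unknown step: {item['name']}")
        plan.append(Step(item["name"], item.get("args", {})))
    return plan


# Example of what a planner response might look like (hand-written here).
raw_plan = """[
  {"name": "fetch_tickets", "args": {"day": "yesterday"}},
  {"name": "group_by_topic"},
  {"name": "detect_urgent"},
  {"name": "generate_summary", "args": {"format": "markdown"}},
  {"name": "post_to_slack", "args": {"channel": "#support"}},
  {"name": "tag_urgent"}
]"""

plan = parse_plan(raw_plan)
print([step.name for step in plan])
```

Rejecting unknown step names at parse time means a hallucinated step fails loudly before any tool is called.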

2. Tools and actions

Tools are just functions with a schema that the LLM can call.

Examples:

- get_new_tickets(from_date, to_date)

- update_ticket(id, tags)

- send_slack_message(channel, text)

- run_sql(query)

- search_vector_store(query, top_k)

In practice, you:

- Expose these functions to the LLM through the framework

- Let the model choose which one to call based on the plan and context

- Validate the arguments and results on the server side
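As a rough illustration of those three steps without any framework, the sketch below registers a function together with a small argument schema and validates a model-proposed call before executing it. The `tool` decorator, `TOOLS` registry and `call_tool` helper are hypothetical names, and `update_ticket` is a stub rather than a real helpdesk call.

```python
from typing import Any, Callable

# Registry: tool name -> (function, {argument name: expected type}).
TOOLS: dict[str, tuple[Callable[..., Any], dict[str, type]]] = {}


def tool(**schema: type):
    """Decorator that exposes a function to the agent with a simple schema."""
    def register(fn):
        TOOLS[fn.__name__] = (fn, schema)
        return fn
    return register


@tool(id=int, tags=list)
def update_ticket(id: int, tags: list) -> dict:
    # Stub: a real version would call the helpdesk API here.
    return {"id": id, "tags": tags}


def call_tool(name: str, args: dict) -> Any:
    """Validate a model-proposed call on the server side, then run it."""
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    fn, schema = TOOLS[name]
    if set(args) != set(schema):
        raise ValueError(f"expected arguments {sorted(schema)}, got {sorted(args)}")
    for key, expected in schema.items():
        if not isinstance(args[key], expected):
            raise TypeError(f"{key} must be {expected.__name__}")
    return fn(**args)


# The model would emit something like this as its tool call.
print(call_tool("update_ticket", {"id": 7, "tags": ["billing"]}))
```

Frameworks typically do the schema part for you, but the principle is the same: the model proposes a call, and your code decides whether it is well formed.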

If you are using:

- LangChain / LangGraph: tools are usually simple Python functions with pydantic schemas.

- OpenAI Assistants: tools are “functions” or “actions” defined in the API.

- Semantic Kernel: tools are functions grouped into “plugins” (called “skills” in older versions) that you register in the kernel.

3. Memory and knowledge

Useful agents need long-term awareness:

- Conversation history

- Past actions taken

- Domain knowledge like documentation, policies, configuration

The typical pattern is:

- Use a vector database such as Qdrant, Pinecone, Chroma, Redis, PostgreSQL with pgvector or Azure AI Search.

- Store chunks of documents, tickets, logs or wiki pages as embeddings.

- At runtime, retrieve relevant chunks as the agent works.

This is where RAG and agents come together. The agent does not guess everything. It pulls ground truth from your own data.
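The store-then-retrieve pattern can be sketched in a few lines. This toy version uses word counts as a stand-in for real embeddings and an in-memory list instead of a vector database, so `embed`, `cosine` and `TinyStore` are purely illustrative; a real setup would call an embedding model and one of the stores listed above.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Toy "embedding": word counts. A real system would call an embedding model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class TinyStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def search(self, query: str, top_k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:top_k]]


store = TinyStore()
store.add("Refunds are processed within five business days")
store.add("Password reset links expire after one hour")
store.add("Invoices are emailed on the first of each month")

# The retrieved chunk would be placed into the agent's prompt as context.
print(store.search("how long do refunds take", top_k=1))
```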

For example, a customer support agent can:

- Retrieve relevant past tickets and internal articles

- Read product documentation about a feature

- Use that context to draft a response or decide the right tag

4. Feedback loop and safety

As soon as agents start calling real systems, you need guardrails.

A simple pattern that works well in production:

- Low risk actions (adding tags, drafting text, running read only searches) can be fully automated

- Medium risk actions (sending emails, posting to a channel) go through human approval

- High risk actions (changing customer data, updating permissions, executing arbitrary code) are restricted or completely disallowed

You can implement this with queues and status fields. For example:

- Agent writes “suggested actions” to a database table

- Human reviewer approves or rejects

- A worker service executes only approved actions

This keeps the LLM in the role of a smart planner, while your regular backend keeps authority.
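A minimal sketch of that queue, using an in-memory SQLite table so it runs anywhere. The risk mapping, table layout and function names are assumptions for illustration; in a real system the table would live in your application database and the worker would call actual services.

```python
import sqlite3

# Hypothetical risk tiers per action name.
RISK = {"add_tag": "low", "send_email": "medium", "update_permissions": "high"}

db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE actions (id INTEGER PRIMARY KEY, name TEXT, payload TEXT, status TEXT)"
)


def suggest(name: str, payload: str) -> str:
    """Agent side: record a suggested action; its status depends on the risk tier."""
    risk = RISK.get(name, "high")  # unknown actions are treated as high risk
    status = {"low": "approved", "medium": "pending", "high": "rejected"}[risk]
    db.execute(
        "INSERT INTO actions (name, payload, status) VALUES (?, ?, ?)",
        (name, payload, status),
    )
    return status


def review(action_id: int, approve: bool) -> None:
    """Human side: only pending rows can change state."""
    db.execute(
        "UPDATE actions SET status = ? WHERE id = ? AND status = 'pending'",
        ("approved" if approve else "rejected", action_id),
    )


def run_worker() -> list[str]:
    """Worker side: execute approved actions only, then mark them done."""
    rows = db.execute(
        "SELECT id, name FROM actions WHERE status = 'approved' ORDER BY id"
    ).fetchall()
    for action_id, _ in rows:
        db.execute("UPDATE actions SET status = 'done' WHERE id = ?", (action_id,))
    return [name for _, name in rows]


suggest("add_tag", "ticket 12: billing")        # low risk, auto-approved
suggest("send_email", "reply to ticket 12")     # medium risk, waits for review
suggest("update_permissions", "grant admin")    # high risk, rejected outright
review(2, approve=True)

executed = run_worker()
print(executed)  # prints: ['add_tag', 'send_email']
```

Note that the agent never executes anything itself here. It only writes rows, which is what keeps authority in the backend.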

Practical workflows developers can automate with agents

Here are some examples that are realistic for a solo dev or a small team.

Support ticket triage

Stack idea:

- LangGraph or OpenAI Assistants for the agent

- Helpdesk API (Zendesk, Freshdesk, Intercom)

- Vector store with your internal docs

Flow:

- Fetch new tickets every few minutes

- For each ticket, the agent:

  - Searches internal docs and past tickets

  - Suggests tags, priority, and team

- System updates tickets automatically for low risk cases

- Ambiguous or sensitive tickets are sent to a human with notes

Developer productivity bot

Stack idea:

- Semantic Kernel or LangChain

- GitHub API, CI logs, issue tracker

- Vector store with documentation and architecture notes

Flow:

- Agent reads new pull requests, CI logs and open issues

- It generates a daily digest, highlights risky areas and suggests tests

- It can answer “what changed in the last 24 hours in the payments service” from the docs and git history

Internal data analysis assistant

Stack idea:

- Python with LangChain

- Warehouse (Snowflake, BigQuery, Postgres)

- SQL tool and charting tool

Flow:

- Agent receives a plain-language question such as “What was the churn for monthly subscribers last quarter by country?”

- It plans a sequence of SQL queries

- Executes them through a safe SQL tool with restrictions

- Generates a text and chart answer for the business team
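One way to read "a safe SQL tool with restrictions" is a wrapper that only lets single `SELECT` statements through and caps the number of rows returned. The sketch below uses SQLite so it is self-contained; `run_readonly_sql` and the `subscriptions` table are made up for the example. String checks like these are a first line of defense only, and in a real warehouse you would also connect with a read-only role.

```python
import sqlite3


def run_readonly_sql(conn: sqlite3.Connection, query: str, max_rows: int = 100) -> list:
    """Run a model-generated query only if it is a single SELECT statement."""
    stripped = query.strip().rstrip(";")
    if ";" in stripped:
        raise ValueError("only a single statement is allowed")
    if not stripped.lower().startswith("select"):
        raise ValueError("only SELECT statements are allowed")
    cursor = conn.execute(stripped)
    return cursor.fetchmany(max_rows)  # cap the result size


# Demo data standing in for a warehouse table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE subscriptions (country TEXT, churned INTEGER)")
conn.executemany(
    "INSERT INTO subscriptions VALUES (?, ?)",
    [("DE", 1), ("DE", 0), ("US", 1)],
)
conn.commit()
# Second layer of defense: SQLite itself refuses writes on this connection.
conn.execute("PRAGMA query_only = ON")

rows = run_readonly_sql(
    conn,
    "SELECT country, SUM(churned) FROM subscriptions GROUP BY country ORDER BY country",
)
print(rows)  # prints: [('DE', 1), ('US', 1)]
```

The check is intentionally conservative: it also rejects valid queries that start with `WITH` or contain a semicolon inside a string literal. For a first version, rejecting too much is usually the safer failure mode.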

How to get started quickly as a dev

If you are a developer and want to experiment without building a platform from scratch, a simple sequence looks like this:

- Pick a narrow, boring workflow

  Something you or your team do daily and hate. For example, “summarize yesterday’s support tickets and highlight three key issues”.

- Start with a single agent and one or two tools

  Use LangChain or Semantic Kernel to connect:

  - An LLM

  - Your ticket API

  - A vector store with documentation

- Hard code the plan first

  Before asking the LLM to “be a planner”, implement a fixed step pipeline. Once that works, gradually let the model decide small branches.

- Add logging from day one

  Log prompts, tool calls and outputs. This will save you when you need to debug weird decisions and improve prompts.

- Introduce human approval slowly

  First, let the agent only suggest actions. When the suggestions look good for some time, auto apply a subset of them.
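The "hard code the plan first" and "add logging from day one" steps combine naturally. Below is a small sketch of a fixed three-step pipeline where every step is logged as a JSON line. The ticket data is stubbed, `summarize` is where a real LLM call would go, and all function names are illustrative.

```python
import functools
import json
import logging
from collections import Counter

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent")


def logged(fn):
    """Log each step's name, arguments and result as one JSON line."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        result = fn(*args, **kwargs)
        log.info(json.dumps({"step": fn.__name__, "args": repr(args), "result": repr(result)}))
        return result
    return wrapper


@logged
def fetch_tickets() -> list[dict]:
    # Stub data; a real version would call the ticket API.
    return [
        {"id": 1, "topic": "billing"},
        {"id": 2, "topic": "login"},
        {"id": 3, "topic": "billing"},
    ]


@logged
def top_issues(tickets: list[dict], n: int = 3) -> list[str]:
    counts = Counter(ticket["topic"] for ticket in tickets)
    return [topic for topic, _ in counts.most_common(n)]


@logged
def summarize(issues: list[str]) -> str:
    # Placeholder for the LLM call that would write the actual summary.
    return "Top issues: " + ", ".join(issues)


def daily_pipeline() -> str:
    # The plan is hard coded: three fixed steps, no model-driven branching yet.
    tickets = fetch_tickets()
    issues = top_issues(tickets)
    return summarize(issues)


print(daily_pipeline())  # prints: Top issues: billing, login
```

Once this runs reliably, a single step such as `top_issues` can be handed to the model while the rest of the pipeline stays fixed.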

Tooling to explore

Here is a quick reference list of tools you can check, depending on your stack:

- Python and JavaScript

  - LangChain

  - LangGraph for graph based orchestration

  - CrewAI and AutoGen for multi agent experiments

- Hosted APIs

  - OpenAI Assistants API

  - Anthropic tool use and workflows (when available in your region)

  - Model specific agent features from cloud providers

- .NET and enterprise

  - Microsoft Semantic Kernel

  - Azure AI Search with function calling and RAG

  - Application Insights or similar for telemetry

- Vector and memory layer

  - Qdrant, Pinecone, Weaviate, Chroma

  - Postgres plus pgvector if you want something simple and controllable

  - Elastic or Azure AI Search if you already live in that ecosystem

The specific choice matters less than the architecture. Start with the smallest possible agent that solves a real internal problem, then gradually add more agents as your confidence grows.

Agentic AI is not about replacing engineers or operators. It is about giving your systems a new “runtime” where some of the control logic is written in natural language and executed by an LLM, while you still own the inputs, tools and final decisions.

Website summary

Agentic AI turns LLMs into practical automation systems for developers by combining planning, tool use, memory and human oversight. With frameworks like LangChain, LangGraph, Semantic Kernel, CrewAI and OpenAI Assistants, it becomes possible to automate real workflows such as support triage, internal analytics and developer productivity while keeping control in your own backend and infrastructure.

Frequently Asked Questions

How is agentic AI implemented in real applications?

Agentic AI is usually implemented as a combination of an LLM, a tool layer for APIs and databases, a memory layer such as a vector database and an orchestrator like LangGraph, OpenAI Assistants or Semantic Kernel that manages the sequence of steps and calls.

Which frameworks can I use to build AI agents as a developer?

Popular options include LangChain and LangGraph in Python and JavaScript, CrewAI and AutoGen for multi agent setups, OpenAI Assistants for hosted orchestration and Microsoft Semantic Kernel for .NET and enterprise environments.

Do I always need a vector database for agentic AI?

Not always, but it helps a lot when workflows depend on documentation, past tickets, conversations or logs. In those cases a vector store such as Qdrant, Pinecone, Postgres with pgvector or Azure AI Search lets the agent retrieve relevant context instead of hallucinating.

How can I keep my agentic AI solution safe in production?

You can restrict which tools agents can call, validate tool arguments on the backend, log all prompts and decisions, introduce human approval for risky actions and use normal security practices such as auditing, rate limiting and permissions on any system the agent can access.

What is a good first project to try agentic AI on?

A good starter is a narrow internal workflow like daily ticket summaries, auto tagging support requests or generating a daily engineering digest from pull requests and logs. These use mostly read only access and clear rules, which makes it easier to test, monitor and improve the agent over time.

Can small teams use agentic AI without huge infrastructure?

Yes. Many teams start with a single LLM API, a lightweight framework such as LangChain or Semantic Kernel and a simple vector store. You can run the orchestration in a normal web backend, serverless function or worker without needing a massive new platform.

How do agents relate to RAG and traditional chatbots?

RAG focuses on fetching the right information from your data. Chatbots focus on conversational interfaces. Agents combine both and add the ability to call tools and execute multi step plans, which makes them better suited for automating workflows instead of just answering questions.