Beyond the Hype Cycle: 5 Developer AI Trends Defining 2026 (Based on LinkedIn Data & Real-World Signals)

Let’s be real for a second. If you’re a developer in late 2025, your LinkedIn feed is probably 50% hustle culture bro-poetry and 50% overwhelming updates about new AI models. It’s exhausting. We went from “AI will replace us all” panic in 2023 to “AI is just a fancy autocomplete” cynicism in 2024, and finally landed in the “Okay, how do I actually use this to ship faster and not get fired?” pragmatism of 2025.

But 2026 is knocking, and the landscape is shifting again. It’s no longer just about using AI tools; it’s about fundamentally restructuring how we build software and the very definition of what a “developer” is.

I’ve been analyzing the signal amidst the noise tracking hiring trends on LinkedIn, seeing what skills are showing up in job descriptions for “Staff Engineer” roles, and observing what’s actually happening in engineering discords and private Slack channels.

The vibe shift is palpable. We are moving from passive AI assistance to active AI agency. Here are the five trends that will define developer life in 2026, backed by data and real-world realities.

1. The Rise of “Agentic Workflow Architects” ( Goodbye, Prompt Engineering)

Remember when “Prompt Engineer” was predicted to be the six-figure job of the future? Yeah, that didn’t age well. It turns out, writing a good prompt is just a basic literacy skill now, like knowing how to Google effectively.

The real juice in 2026 is Agentic AI.

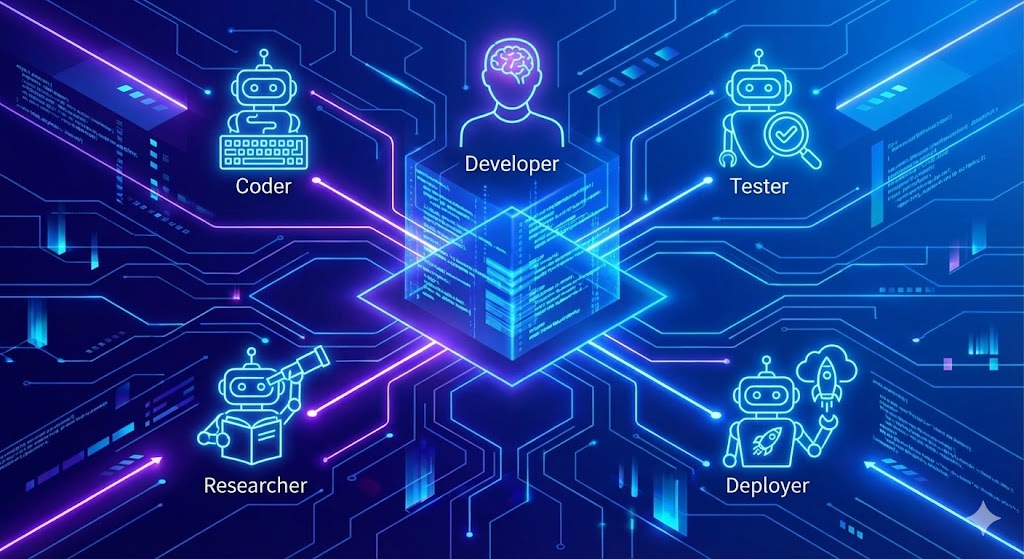

We aren’t just asking a chatbot to write a function anymore. We are building systems where multiple AI agents interact to solve a complex problem. One agent might handle research, another writes the initial code, a third writes the tests, and a fourth acts as a critic to review the whole package.

The LinkedIn Signal:

The buzzwords on profiles are shifting from “experienced with ChatGPT” to “orchestrating multi-agent systems,” “LangGraph proficiency,” and “building autonomous dev workflows.” Companies aren’t looking for people who can chat with AI; they want engineers who can build the structures within which AI can operate semi-autonomously.

The Real-World Vibe Check:

Instead of spending hours debugging a cryptic error, imagine spinning up an agent, giving it read access to your repo and the logs, and telling it: “Find out why the payment service fails only on Tuesdays under high load, and propose a fix.”

In 2026, the best developers will be the ones who are best at managing these digital workforces. You become less of a bricklayer and more of a construction site foreman.

2. Small Language Models (SLMs) and the “Localhost Renaissance”

For the last few years, the mantra has been “bigger is better.” Trillion-parameter models running on massive GPU clusters in the cloud were the gold standard. But the pendulum is swinging hard in the other direction.

Enter Small Language Models (SLMs). These are models optimized for specific tasks—like coding in Python, analyzing security vulnerabilities, or summarizing legal documents—that are small enough to run locally on a reasonably powered laptop.

Also Read:Strings are Dead: Why PydanticAI is the Only Way I Write Agents Now

Why the shift? Two reasons: Latency and Privacy.

Enterprises are realizing they can’t send every single line of proprietary code to an external API owned by a tech giant. It’s a compliance nightmare. Furthermore, waiting 500ms for a code completion suggestion breaks the “flow state.”

The LinkedIn Signal:

We are seeing a massive spike in demand for skills related to model quantization, edge deployment, and tools like Ollama, Llama.cpp, and MLX for Apple Silicon. “On-device AI” is becoming a requisite skill for mobile and IoT developers.

The Real-World Vibe Check:

In 2026, your dev environment will likely include a specialized 7B or 13B parameter model running right there on your machine, fine-tuned specifically on your company’s codebase and coding style. It works offline, it doesn’t leak data, and it’s blazing fast. The cloud will be reserved for the heavy lifting, but the day-to-day work is coming back to localhost.

Also Read:Vibe Checks Aren’t Enough: How to Actually “Unit Test” Your AI Agents

3. Governance-as-Code: The New “Shift Left”

“Shift Left” used to mean moving testing and security earlier in the development lifecycle. In 2026, it means shifting AI governance and ethics left.

With the EU AI Act fully in motion and similar regulations popping up globally, you cannot just slap an LLM into your product and hope it doesn’t hallucinate something racist or leak PII (Personally Identifiable Information). If you build it, you are responsible for its output.

This means AI guardrails are no longer a “nice to have” feature managed by a separate trust and safety team. They are foundational requirements implemented by software engineers.

Also Read:

The LinkedIn Signal:

Job titles like “AI Governance Engineer” or “AI Compliance Architect” are exploding. But more importantly, standard Senior Software Engineer roles are now asking for experience with “LLM evaluation frameworks,” “implementing guardrails,” and “AI transparency standards.”

The Real-World Vibe Check:

You’ll be writing unit tests for your AI’s personality and safety guidelines. Before a feature merges, your CI/CD pipeline won’t just run SonarQube; it will run an evaluation suite checking your model for bias drift and ensuring it refuses to answer harmful prompts. If the AI’s “safety score” drops below a certain threshold, the build fails. Governance is becoming just another part of the TDD cycle.

4. The Full-Stack AI Lifecycle (Beyond Just Coding)

Up until now, most “AI for devs” tools have focused solely on the “writing code” phase. GitHub Copilot is the prime example. But writing code is maybe 30% of what we actually do.

The remaining 70% requirements gathering, architectural design, Jira ticket management, documentation, testing, deployment, and on-call incident response is ripe for disruption.

In 2026, we will see end-to-end AI integration across the entire Software Development Life Cycle (SDLC).

The LinkedIn Signal:

Companies are looking for devs who know how to integrate AI into their DevOps pipelines. Skills involving “AI-driven testing,” “automated documentation generation from code,” and “intelligent incident response” are trending up.

The Real-World Vibe Check:

Imagine this workflow:

- An AI PM agent listens to a stakeholder meeting and drafts Jira tickets.

- You pick up a ticket. Your IDE’s AI agent analyzes the requirements, suggests an architecture, and outlines the necessary changes across different microservices.

- You write the core logic (assisted by AI).

- Another AI agent automatically generates the unit and integration tests based on your code and the original ticket requirements.

- Upon deployment, an AI SRE agent monitors logs for anomalies, predicting incidents before they cascade.

It’s the glow-up the entire SDLC desperately needed.

5. The Death of the “Junior Dev” (and the Birth of the “Apprentice Architect”)

This is the hardest pill to swallow, and the one causing the most anxiety on platforms like Reddit and blind.

The traditional entry-level role where you spend six months writing boilerplate CRUD endpoints and fixing CSS centering issues is vanishing. AI can do that faster, cheaper, and honestly, sometimes better than a fresh bootcamp grad.

But this isn’t the end of entry-level jobs; it’s a forced evolution.

The LinkedIn Signal:

Entry-level postings that require just “HTML/CSS/JS and React” are drying up. They are being replaced by roles asking for strong fundamental problem-solving skills, system design understanding, and the ability to “review and validate AI-generated code.”

The Real-World Vibe Check:

In 2026, a junior developer isn’t a code monkey; they are an Apprentice Architect. Because the AI handles the syntax and the boilerplate, juniors are forced to operate at a higher level of abstraction much earlier in their careers. They need to understand why the code works, how components interact, and how to spot subtle logic errors that the AI missed.

The barrier to entry for coding has never been lower, but the barrier to entry for engineering is getting higher. You need to level up your foundational knowledge to survive.

The Takeaway for 2026

Look, the anxiety is real. I feel it too. The pace of change is unrelenting. But if you look at the data coming out of LinkedIn and the actual workflows adopted by leading tech companies, the message is clear: AI isn’t replacing developers; it’s raising the baseline.

The developers who will thrive in 2026 aren’t the ones resisting AI, nor are they the ones blindly trusting it. They are the pragmatists who learn to architect agentic workflows, who understand the importance of running local models for privacy, and who treat governance as a core engineering discipline.

It’s time to stop worrying about your job being taken by a chatbot and start focusing on becoming the engineer who manages the fleet of chatbots. The tools are changing, but the mission solving human problems with technology remains the same. Stay curious.

Frequently Asked Questions on 2026 Developer Trends

Is “Prompt Engineering” officially dead as a career path?

As a standalone, six-figure job title? Yeah, pretty much. It’s like “Googling.” It’s a fundamental literacy skill that every developer needs to have, not a specialized role. The market has shifted toward AI orchestration building the systems where prompts are used dynamically by agents rather than just crafting the perfect sentence.

I’m a bootcamp grad or a junior developer. Am I completely cooked?

No, but you need to pivot your strategy immediately. The era of getting hired just knowing syntax and framework basics is ending. You need to focus on foundational engineering concepts: system design, data structures, and critically reviewing code.

Think of yourself as an “Apprentice Architect.” The AI will write the boilerplate; your job is to understand how the pieces fit together and catch the subtle, dangerous hallucinations the AI misses.

How do I actually get started with “Agentic AI”?

Move beyond the ChatGPT web interface. Start getting your hands dirty with orchestration frameworks. Look into LangChain (specifically their LangGraph library for stateful agents) or Microsoft’s AutoGen.

Try building a simple project where two agents have to collaborate—for example, one agent searches the web for information, and another agent formats that information into a markdown report.

Why would I bother running a “Small Language Model” locally when GPT-5 or Claude are so much more powerful in the cloud?

Two massive reasons: Privacy and Latency. If you are working on highly sensitive proprietary IP in fintech or healthcare, your legal team is not going to let you paste that code into a cloud-based LLM.

A local model ensures data never leaves your machine. Secondly, speed is everything in development. Waiting 2-3 seconds for a cloud API to return a code suggestion breaks your flow state. A 7B parameter model running locally on a MacBook Pro is near-instant.

“Governance-as-Code” sounds like boring corporate compliance stuff. Do engineers really need to care about this?

You absolutely do, unless you enjoy having your product shut down by regulators or getting sued because your AI did something illegal. In 2026, AI governance isn’t paperwork; it’s an engineering constraint.

Just like you write tests for security vulnerabilities or performance bottlenecks, you need to write automated tests (evals) to ensure your AI behaves within acceptable boundaries. It’s a core part of shipping responsible software.