Nature Just Got Nerfed: Inside MIT’s AI-Powered ‘Bumblebee’ Robot

Let’s be real for a second. Traditional drones, the quadcopters we all know and love, are kind of clumsy. They’re loud, they’re rigid, and if they clip a wall, it’s game over. They are built on the principles of airplanes and helicopters: rigid bodies, spinning rotors, and aerodynamics that rely on steady airflow.

But nature doesn’t work like that. Nature is messy. It’s windy, chaotic, and full of obstacles. Yet, a bumblebee can navigate a windstorm, dodge a swatting hand, and land on a moving flower petal without breaking a sweat.

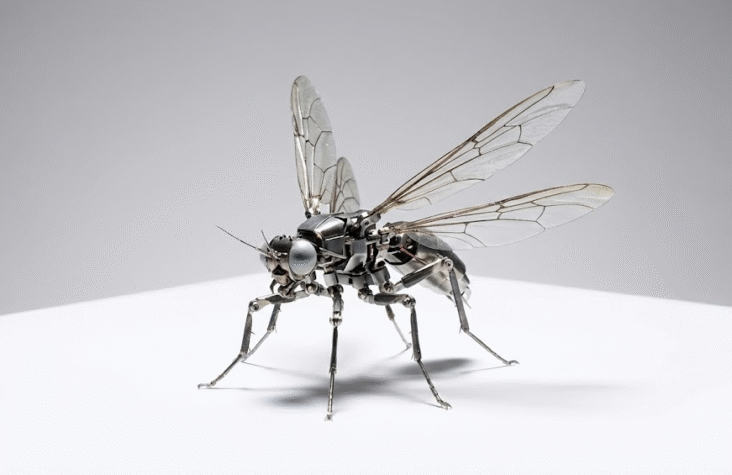

That specific level of agility has been the “white whale” for roboticists for decades. And now, researchers at MIT’s Department of Electrical Engineering and Computer Science (EECS), led by Associate Professor Kevin Chen, have officially cracked the code. They’ve built a soft-actuated, insect-scale robot that flies like a bee, survives collisions, and runs on a brain powered by Deep Learning.

This isn’t just a cool gadget; it’s a paradigm shift in how we think about aerial robotics, control theory, and the intersection of hardware and AI.

Here is the full breakdown of how they did it, why the software stack matters, and what this means for the future of tech.

1. The Hardware Stack: Why “Soft” is Hard

To understand why this robot is such a big deal, you have to understand the nightmare of scaling physics.

When you shrink a robot down to the size of a bug (about 4 centimeters) and the weight of a paperclip (under 1 gram), standard engineering rules stop applying. You can’t just shrink a DJI Phantom motor. Electric motors lose efficiency drastically as they get smaller due to friction and heat dissipation issues. Gears grind; bearings seize. It’s a mess.

Enter Dielectric Elastomer Actuators (DEAs)

MIT ditched rigid motors entirely. Instead, they’re using Dielectric Elastomer Actuators (DEAs). Think of these as artificial muscles.

Here’s the tech breakdown:

- The Material: These actuators are made of soft materials (elastomers) sandwiched between microscopic electrodes.

- The Mechanism: When you apply a voltage, the electrodes attract each other and squeeze the elastomer, so it gets thinner and stretches outward. Turn the voltage off, and it springs back.

- The Frequency: By oscillating this voltage, the robot can flap its wings at 330 to 400 Hz (that’s up to 400 times per second).

This mimics the biological mechanics of insect flight almost perfectly. Because the actuators are soft, they are resilient. If a rigid drone hits a wall, the propeller snaps. If this soft robot hits a wall, it just bounces. It creates a robot that is inherently robust at the hardware level.
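To make the flapping frequency concrete, here is a minimal Python sketch of a drive signal for a single actuator. The article only gives the 330 to 400 Hz range; the sinusoidal shape, the `v_peak` amplitude, and the sample rate below are placeholder assumptions for illustration, not MIT's actual drive electronics.

```python
import math


def drive_voltage(t, freq_hz=400.0, v_peak=1000.0):
    """Unipolar sinusoidal drive that swings between 0 and v_peak volts.

    v_peak is a hypothetical placeholder; the article does not state
    the real drive voltage.
    """
    return 0.5 * v_peak * (1.0 - math.cos(2.0 * math.pi * freq_hz * t))


def sample_waveform(freq_hz=400.0, v_peak=1000.0,
                    sample_rate_hz=40_000, duration_s=0.005):
    """Sample the drive signal at a fixed rate (assumed, not from the source)."""
    n = int(round(sample_rate_hz * duration_s))
    return [drive_voltage(i / sample_rate_hz, freq_hz, v_peak) for i in range(n)]


samples = sample_waveform()
period_ms = 1000.0 / 400.0  # duration of one full flap cycle at 400 Hz
print(f"one flap cycle lasts {period_ms:.2f} ms; {len(samples)} samples generated")
```

At 400 Hz a full flap cycle lasts 2.5 ms, which is the time budget the actuator has to squeeze and relax once.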

2. The Physics: Battling the Reynolds Number

As a software guy, I usually focus on the code, but you can’t code flight without understanding the environment. At the scale of a fruit fly or this robot, the air doesn’t feel like air. It feels like syrup.

This is governed by the Reynolds Number ($Re$), which is a dimensionless quantity in fluid mechanics used to predict flow patterns. The equation looks like this:

$$Re = \frac{\rho u L}{\mu}$$

Where:

- $\rho$ is the density of the fluid (air).

- $u$ is the flow speed (velocity of the wing).

- $L$ is a characteristic linear dimension (wing length).

- $\mu$ is the dynamic viscosity of the fluid.

For a Boeing 747, $Re$ is massive: inertia dominates and the flow is turbulent. For this tiny robot, $Re$ is orders of magnitude lower, so viscous forces matter far more. This means the robot has to constantly fight the air just to move. It’s like trying to swim in honey.

To fly in “honey,” you can’t glide. You have to flap aggressively and create complex vortices to generate lift. This requires a control system that can react faster than a human pilot ever could.
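If you want to plug numbers into the Reynolds equation above, here is a rough Python sketch. The air properties are approximate sea-level values; the speeds and lengths for both the airliner and the robot are ballpark guesses of mine for illustration, not figures from the MIT team.

```python
def reynolds_number(density, speed, length, viscosity):
    """Re = rho * u * L / mu, all in SI units."""
    return density * speed * length / viscosity


# Approximate sea-level air properties.
AIR_DENSITY = 1.225       # kg/m^3
AIR_VISCOSITY = 1.81e-5   # Pa*s

# Illustrative guesses, not measured values:
# an airliner at ~250 m/s with an ~8 m wing chord,
# and an insect-scale wing moving at ~5 m/s with a ~1 cm length.
airliner_re = reynolds_number(AIR_DENSITY, 250.0, 8.0, AIR_VISCOSITY)
robot_re = reynolds_number(AIR_DENSITY, 5.0, 0.01, AIR_VISCOSITY)

print(f"airliner Re ~ {airliner_re:.2e}")
print(f"robot Re    ~ {robot_re:.2e}")
print(f"ratio       ~ {airliner_re / robot_re:.0f}x")
```

Whatever exact values you pick, the gap is several orders of magnitude, which is why tricks that work for airplanes don't transfer to bug-sized wings.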

3. The Software: From PID to Deep Learning

This is where the magic happens. This is where the AI comes in.

In previous iterations, researchers used standard linear controllers such as PID (Proportional-Integral-Derivative) controllers. These work great for your thermostat or a stable cruise control, but they are terrible at handling chaos. They require “hand-tuning” of parameters. If the wind changes, or a wing gets slightly damaged, the tuned parameters no longer fit, and the robot crashes.
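For anyone who hasn't written one, this is roughly what a textbook PID loop looks like in Python. It is a generic sketch, not the controller from MIT's earlier robots: the toy plant (a point mass whose acceleration we command directly) and the three gains are made up for illustration.

```python
class PID:
    """Textbook PID controller with hand-picked gains."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement
        self.integral += error * dt
        # No derivative on the very first call, since there is no history yet.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(pid, target=1.0, steps=2000, dt=0.001):
    """Toy plant (hypothetical): altitude z driven by a commanded acceleration."""
    z, vz = 0.0, 0.0
    for _ in range(steps):
        accel = pid.update(target, z, dt)
        vz += accel * dt
        z += vz * dt
    return z


# Gains tuned by hand for this toy plant only.
final_altitude = simulate(PID(kp=40.0, ki=5.0, kd=12.0))
print(f"altitude after 2 s: {final_altitude:.3f}")
```

The three gains are the “hand-tuning” problem in miniature: they fit this one plant, and nothing in the loop adapts if the plant changes.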

The MIT team realized that hard-coding the physics wasn’t working. The aerodynamics at this scale are too complex to model perfectly with standard equations.

The Solution: Sim-to-Real Reinforcement Learning

Instead of programming the robot to fly, they let it learn how to fly. But here’s the catch: you can’t train a neural network on a physical robot that breaks every time it crashes. You’d run out of robots in an hour.

So, they used a Sim-to-Real pipeline.

- The Simulation: They built a high-fidelity physics simulator to model the robot and the aerodynamics.

- The Policy Training: They used Deep Reinforcement Learning (RL). In the sim, the virtual robot tried millions of flights. It crashed, learned, adjusted, and tried again. It learned to map “state” (orientation, velocity) to “action” (voltage to wings).

- Domain Randomization: To make sure the AI didn’t just memorize the simulation, they randomized the physics in the sim, changing the virtual wind, the wing weight, and the air density. This forced the AI to learn a “general” policy that could handle uncertainty.

- Deployment: They took this trained neural network and ported it onto the microcontroller of the real robot.
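The domain randomization step in the pipeline above can be sketched in a few lines of Python. This is only the sampling skeleton: the parameter names, the ranges, and the `training_loop` helper are hypothetical, and the actual simulator rollout and policy update are left out because the article doesn't describe them.

```python
import random
from dataclasses import dataclass


@dataclass
class SimParams:
    """Physics knobs randomized per episode (names and ranges are hypothetical)."""
    wind_speed_m_s: float
    wing_mass_scale: float
    air_density_kg_m3: float


def sample_sim_params(rng):
    """Draw a fresh set of physics parameters for one training episode."""
    return SimParams(
        wind_speed_m_s=rng.uniform(0.0, 1.0),
        wing_mass_scale=rng.uniform(0.9, 1.1),
        air_density_kg_m3=rng.uniform(1.10, 1.30),
    )


def training_loop(num_episodes, seed=0):
    """Collect the randomized parameters each episode would run with."""
    rng = random.Random(seed)
    episodes = []
    for _ in range(num_episodes):
        params = sample_sim_params(rng)
        # A real pipeline would reset the simulator with `params`,
        # roll out the policy, and update it from the reward. Omitted here.
        episodes.append(params)
    return episodes


runs = training_loop(100, seed=1)
print(runs[0])
```

Because every episode sees slightly different physics, the policy can't overfit to one exact simulator, which is the whole point of the technique.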

The Result?

The robot flies with an “intuitive” understanding of aerodynamics. It’s not following a script; it’s reacting.

When they turned on the fans to simulate wind gusts, the AI controller adjusted the wing beats instantly to maintain stability. The old PID controller would have flipped over. The AI controller performed somersaults.

Yes, somersaults. The team demonstrated the bot doing 10 backflips in 11 seconds. This isn’t just a party trick; it demonstrates torque authority. It means the AI can generate massive force in a split second to correct its orientation. That is the definition of agility.

4. Real-World Applications: Why We Need Bug-Bots

Okay, cool tech. But why do we care? What is the shipping product here?

We aren’t going to be using these to deliver Amazon packages (they can’t lift a box). But the applications for Micro-Aerial Vehicles (MAVs) are massive in sectors where humans and traditional robots fail.

A. Search and Rescue (The “Rubble” Problem)

After an earthquake or a building collapse, the biggest challenge is locating survivors. Dogs can only smell so far; humans can’t fit in the cracks; heavy drones disturb the debris.

These “bumblebee” bots can fly through gaps the size of a coin. A swarm of them could be released into a disaster zone, mapping the interior and using thermal sensors to find body heat, then relaying that location to the rescue team.

B. Industrial Inspection

Think about a jet turbine or a massive fusion reactor. Inspecting the insides usually means taking the whole machine apart, which costs millions in downtime.

A soft, tiny robot can fly inside the machinery while it’s offline (or even online, if agile enough) to inspect for micro-cracks or wear and tear.

C. Artificial Pollination

This is the Black Mirror episode, but in a good way. With global bee populations declining, vertical farms and greenhouses are looking for alternatives.

This robot is literally designed to mimic a bumblebee. It could be programmed to carry pollen from flower to flower, ensuring food security in controlled environments.

5. The Bottlenecks: What’s Stopping Us?

I’m an optimist, but I’m also a dev. I know that demos aren’t production. Kevin Chen estimates we are 5–10 years away from real-world deployment. Here is what is still broken in the stack:

Power Density

Currently, the robot is often tethered or runs for a very short time. Batteries are heavy. The more battery you add, the heavier the bot, the more lift you need, the more power you drain. It’s a vicious cycle.

The Fix: Solid-state batteries or wireless power transmission (laser power beaming) might be the solution here.
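The battery vicious cycle is easy to see with a toy model in Python. The big assumption here is mine, not the article's: hover power grows with total mass to the power 1.5, a scaling borrowed from idealized rotor theory that may not hold for flapping wings. The masses are arbitrary units.

```python
def relative_endurance(frame_mass, battery_mass, exponent=1.5):
    """Flight time in arbitrary units: battery energy / hover power.

    Assumes energy is proportional to battery mass and hover power is
    proportional to total_mass ** exponent (a hypothetical scaling law).
    """
    total_mass = frame_mass + battery_mass
    return battery_mass / total_mass ** exponent


frame = 1.0  # arbitrary units
for battery in (0.5, 1.0, 2.0, 4.0, 8.0):
    t = relative_endurance(frame, battery)
    print(f"battery = {battery:>3} x frame mass -> endurance {t:.3f}")
```

Under this assumption, endurance peaks when the battery weighs about twice the frame and then gets worse, so past that point extra battery mostly pays for carrying itself.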

On-Board Compute

The AI model is efficient, but running a neural net on a sub-gram chip is hard. Currently, a lot of the heavy sensing (like motion capture cameras in the lab) is done off-board.

The Fix: We need better Edge AI chips. We need the robot to do SLAM (Simultaneous Localization and Mapping) entirely on its own processor without communicating with a server.

Swarm Coordination

One bee is useless. You need a hive. Programming a distributed system where 1,000 robots talk to each other without crashing the network (latency, bandwidth) is a massive software architecture challenge.
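Some quick arithmetic in Python shows why the network is the scary part. The two helper functions and the neighbor count of 6 are hypothetical choices for illustration; the article doesn't say how a real swarm would be wired.

```python
def all_to_all_links(n):
    """Directed links if every robot messages every other robot."""
    return n * (n - 1)


def neighbor_links(n, k):
    """Directed links if each robot only messages its k nearest neighbors."""
    return n * min(k, n - 1)


swarm_size = 1000
print(all_to_all_links(swarm_size))   # 999000
print(neighbor_links(swarm_size, 6))  # 6000
```

Naive all-to-all chatter grows quadratically with swarm size, while neighbor-only messaging grows linearly, which is why distributed designs usually limit who talks to whom.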

6. The Bigger Picture: The Era of Bio-Hybrid Tech

This robot represents a shift in philosophy. For a long time, we tried to conquer nature with brute force (steel, jet fuel, rigid angles). Now, we are learning to join it.

We are seeing Bio-Hybrid Robotics:

- Robots that use mushroom mycelium as controllers.

- Robots that use actual insect antennas as sensors.

- And now, robots that use Deep Learning to mimic the neural patterns of insect flight.

As developers, we are moving away from writing “if/else” logic to training models that develop “instincts.” The code is becoming less deterministic and more probabilistic. It’s scary, but it’s also undeniably the future.

The MIT bumblebee isn’t just a robot; it’s a proof of concept that soft is strong and AI is agile.

Now, if we can just figure out how to keep them from flying into my soda can, we’re golden.

Frequently Asked Questions (FAQs)

Q: Is this robot actually biological?

A: No. It is bio-inspired. It uses synthetic materials (elastomers, carbon nanotubes) to mimic biological functions, but there are no organic cells involved. It’s 100% machine, just very squishy.

Q: Can it spy on me?

A: I mean, technically? But realistically, no. The payload capacity is extremely low. It can barely carry its own power source right now, let alone a high-def camera and a microphone and a transmitter. We are a long way off from Black Mirror surveillance bees.

Q: How does it handle wind if it’s so light?

A: That’s the “Deep Learning” part. Because the AI controller has trained on millions of simulated wind gusts, it reacts faster than a human pilot. It adjusts the wing beat frequency and angle instantly to “fight” the wind, similar to how a real fly stabilizes itself.

Q: Why do we need “soft” robots? Why not just make small metal ones?

A: Durability. At that scale, things break easily. If you drop a metal watch mechanism, it breaks. If you drop a rubber band ball, it bounces. Soft robots can survive collisions, being stepped on, or crashing into walls, which is essential for search and rescue.

Q: What is the “Sim-to-Real” gap mentioned in the article?

A: In AI, a model trained in a video game (simulation) often fails in the real world because the real world has friction, dust, and imperfect physics that the game didn’t capture. The “gap” is the difference in performance. MIT closed this gap using advanced domain randomization techniques.

Q: When can I buy one?

A: Not anytime soon. This is currently bleeding-edge research. The researchers estimate 5 to 10 years before we see practical deployment in industries like agriculture or inspection.