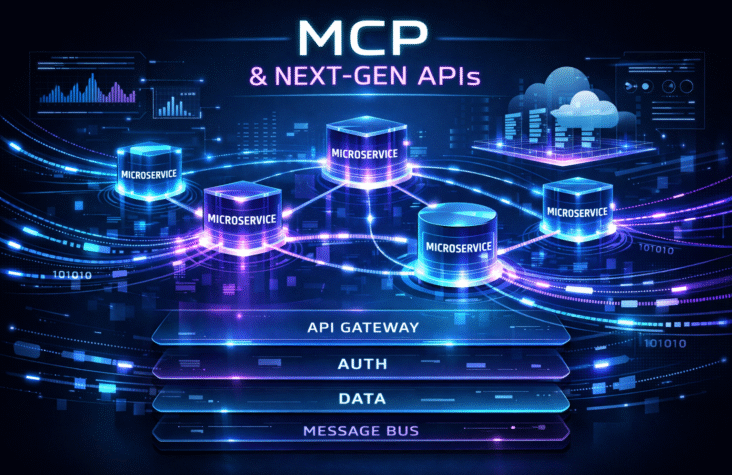

MCP & Next-Gen APIs: Leveling Up Your MEAN/Node.js Backend to API-First or Micro-API Architecture

Alright, let’s talk real talk. You’ve got a MEAN or Node.js backend humming along, doing its thing. It’s been a workhorse, a true MVP in its time. But let’s be honest, in today’s rapid-fire digital landscape, “good enough” is quickly becoming “not quite cutting it.” We’re talking about a world where user expectations are sky-high, scalability is non-negotiable, and the ability to pivot on a dime is the ultimate superpower. This is where API-first and micro-API architectures step in, ready to revolutionize how you build and deploy your applications.

This isn’t just some tech fad we’re gassing up; it’s a fundamental shift towards building more resilient, scalable, and adaptable systems. If you’re still on the fence about transitioning your existing MEAN/Node.js monolith, prepare to have your mind changed. We’re about to break down why this move is not just smart, but essential, and how to actually pull it off without spiraling into a refactoring nightmare.

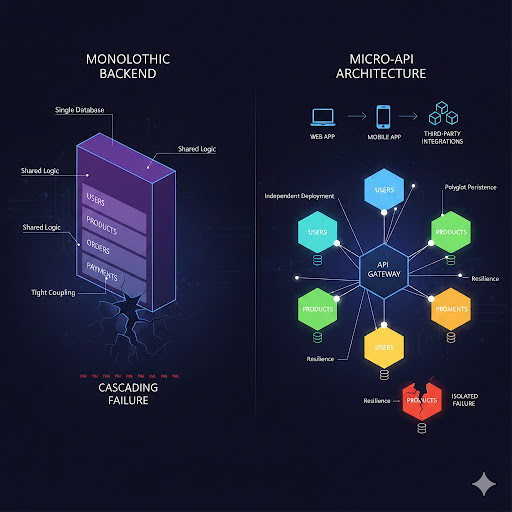

The Monolith’s Golden Cage: Why Your Current Setup Might Be Holding You Back

Before we dive into the shiny new world, let’s acknowledge the elephant in the room: the monolithic backend. When you started, it probably made perfect sense. Everything in one place, easy to manage (at first), and a clear path from dev to deployment. Node.js, with its non-blocking I/O and JavaScript everywhere, made building these single-service applications pretty efficient.

But as your application grew, so did the pain points:

- Scalability Nightmares: Trying to scale a monolithic application is like trying to scale a single, giant building. You can add more floors, but the foundational structure still limits you. If one small part of your app experiences high traffic, you have to scale the entire thing, which is a massive waste of resources.

- Deployment Headaches: A tiny change in one module can necessitate redeploying the entire application. This means longer deployment cycles, increased risk of regressions, and the terrifying “big bang” release.

- Developer Velocity Drag: As the codebase swells, understanding and contributing to it becomes a monumental task for new developers. Inter-team dependencies become a bottleneck, slowing down feature development and innovation.

- Technology Lock-in: You’re stuck with the initial tech stack for your entire application. Want to try a new language or database for a specific service where it would excel? Tough luck, you’re locked in.

- Resilience Risks: A single point of failure. If one component goes down, the entire application can crash, leading to a complete outage.

Sound familiar? Yeah, you’re not alone. Many organizations are facing these very challenges, realizing that while their monolith was a great starting point, it’s now become a bottleneck for future growth and innovation.

Enter API-First & Micro-APIs: The Game Changers

So, what’s the antidote to these monolithic woes? API-first and micro-API architectures. The two terms are often used interchangeably, but they describe different things:

- API-First: This is a philosophy, a mindset. It means designing and building your APIs before you even start developing the consumer applications (web, mobile, third-party integrations). The API is treated as a first-class product, defining the contracts and capabilities of your services upfront.

- Micro-APIs (Microservices): This is an architectural style. It’s about breaking down your application into a collection of small, independently deployable, loosely coupled services, each responsible for a single business capability. Each of these services exposes its functionality through APIs.

When you combine the API-first philosophy with a micro-API architecture, you get a powerful synergy. You’re not just building small services; you’re building well-defined, contract-driven small services.
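
To make “contract-driven” a bit more concrete, here is a minimal sketch, assuming a hypothetical “create order” endpoint. The `orderContract` object and the `validateAgainstContract` helper are illustrative names, not part of any library; in a real project an OpenAPI document plus a schema validator would usually play this role.

```javascript
// Hypothetical contract for a "create order" request, written before any
// service or client code exists. Field names are illustrative assumptions.
const orderContract = {
  userId: "string",
  productId: "string",
  quantity: "number",
};

// Check a payload against the contract: every declared field must be present
// with the declared primitive type, and undeclared fields are rejected.
function validateAgainstContract(contract, payload) {
  const errors = [];
  for (const [field, type] of Object.entries(contract)) {
    if (!(field in payload)) {
      errors.push(`missing field: ${field}`);
    } else if (typeof payload[field] !== type) {
      errors.push(`wrong type for ${field}: expected ${type}`);
    }
  }
  for (const field of Object.keys(payload)) {
    if (!(field in contract)) errors.push(`unexpected field: ${field}`);
  }
  return errors; // an empty array means the payload honors the contract
}
```

Both the service and its consumers can run the same check, which is the point of agreeing on the contract first.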

The Unmatched Benefits of This Modern Approach

Adopting an API-first, micro-API strategy for your Node.js backend isn’t just about buzzwords; it unlocks a whole new level of agility and performance:

- Independent Scalability: Need to scale your Orders service during a flash sale? No problem. Scale just the Orders service. Your Users or Products services can chill. This optimizes resource usage and costs.

- Faster Development & Deployment Cycles: Small teams can own specific services, developing and deploying them independently. This means quicker iterations, fewer conflicts, and features hitting production faster. If a bug is found in one service, you only redeploy that service, minimizing risk.

- Enhanced Resilience & Fault Isolation: If one microservice goes down (e.g., the Payments service encounters an issue), the rest of your application can continue to function, perhaps with graceful degradation. Cascading failures become far less likely, provided calls between services use timeouts and sensible fallbacks.

- Technology Freedom (Polyglot Persistence & Programming): Each microservice can choose the best tool for the job. Your User service might use PostgreSQL, while your Analytics service leverages a NoSQL database like MongoDB or Cassandra. Want to write a specific high-performance service in Go? Go for it! This flexibility empowers teams to use technologies best suited for their specific domain.

- Improved Maintainability & Code Organization: Smaller codebases are easier to understand, maintain, and refactor. Onboarding new developers becomes a smoother process as they only need to grasp the domain of their specific service.

- Better API Management & Ecosystems: Treating APIs as products means better documentation, versioning, and discoverability. This fosters a robust ecosystem, enabling easier integration with third-party services and allowing you to expose your functionality to partners and developers more effectively.
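
Fault isolation doesn’t happen by itself; the calling code has to tolerate a missing dependency. Here is a rough sketch of graceful degradation, assuming a hypothetical product page that treats recommendations as optional. `withFallback` and `buildProductPage` are made-up names for illustration, and the 200 ms budget is an arbitrary choice.

```javascript
// Resolve with the call's result, or with `fallback` if the call rejects
// or takes longer than `timeoutMs`.
function withFallback(call, fallback, timeoutMs) {
  let timer;
  const timeout = new Promise((resolve) => {
    timer = setTimeout(() => resolve(fallback), timeoutMs);
  });
  // Promise.resolve().then(call) also catches synchronous throws from `call`.
  const attempt = Promise.resolve().then(call).catch(() => fallback);
  return Promise.race([attempt, timeout]).finally(() => clearTimeout(timer));
}

// Hypothetical page builder: the product itself is required, but a slow or
// failing recommendations service only costs us the recommendations block.
async function buildProductPage(getProduct, getRecommendations) {
  const product = await getProduct();
  const recommendations = await withFallback(getRecommendations, [], 200);
  return { product, recommendations };
}
```

Production setups often add retries and circuit breakers on top, but the idea is the same: decide up front what the page looks like when a dependency is unavailable.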

The Roadmap: Transitioning Your MEAN/Node.js Monolith

Alright, you’re convinced. This sounds like the move. But how do you actually get there from your existing MEAN/Node.js monolith without burning everything down? It’s not a rip-and-replace scenario; it’s a strategic, iterative process.

Phase 1: Planning and Discovery – The Brain Dump

- Identify Bounded Contexts & Business Capabilities: This is arguably the most crucial step. Look at your existing monolith and identify distinct functional areas or business domains. For an e-commerce app, this might be Users, Products, Orders, Payments, Inventory, Shipping, etc. Each of these could become a microservice.

- Map Dependencies: Understand how these potential services currently interact within your monolith. What data do they share? Which functions call which? This helps you determine the order of extraction and minimize ripple effects.

- Define API Contracts (API-First Mindset): For each identified service, start defining its external API. What endpoints will it expose? What data structures will it accept and return? Use tools like OpenAPI (Swagger) to document these contracts rigorously. This becomes the blueprint.

- Choose Your Tools: Consider your container orchestration (Kubernetes, Docker Swarm), API Gateway (Kong, Nginx, AWS API Gateway), service mesh (Istio, Linkerd), message brokers (Kafka, RabbitMQ), and monitoring solutions. Node.js is still a fantastic choice for building individual microservices due to its event-driven nature and rich ecosystem.
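
The dependency-mapping step above can be sketched in code. This is a minimal example, assuming you have already written down which module calls which; the `dependsOn` map and its module names are hypothetical, and `extractionOrder` is just an illustrative helper built on a standard depth-first topological sort.

```javascript
// Hypothetical dependency map: each module lists the modules it calls.
const dependsOn = {
  orders: ["users", "products", "payments"],
  payments: ["users"],
  products: [],
  users: [],
  notifications: [],
};

// Order modules so that each one appears after everything it depends on.
// Modules with no dependencies of their own surface first, which makes them
// candidates worth looking at early.
function extractionOrder(graph) {
  const order = [];
  const state = new Map(); // module name -> "visiting" | "done"
  function visit(name) {
    if (state.get(name) === "done") return;
    if (state.get(name) === "visiting") {
      throw new Error(`circular dependency involving ${name}`);
    }
    state.set(name, "visiting");
    for (const dep of graph[name] || []) visit(dep);
    state.set(name, "done");
    order.push(name);
  }
  Object.keys(graph).forEach(visit);
  return order;
}

console.log(extractionOrder(dependsOn));
// [ 'users', 'products', 'payments', 'orders', 'notifications' ]
```

A circular dependency throws instead of returning an order, which is a useful signal in itself: those modules need untangling before either can be extracted cleanly.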

Phase 2: The Strangler Fig Pattern – Gentle Extraction

This is the most common and least risky approach for migrating a monolith. It involves slowly “strangling” the monolith by redirecting traffic to new microservices as they are built.

- Identify a “Leaf” Service: Start with a service that has minimal dependencies on other parts of the monolith, or one that’s causing significant pain points (e.g., one that has to scale far more than the rest of the app). A good candidate might be a reporting service, a user profile service, or a notification service.

- Build the New Microservice:

  - New Database (if applicable): Design a dedicated database for this new service. Migrate relevant data from the monolithic database. This embraces the “data ownership per service” principle.

  - New Node.js Application: Develop the microservice as a standalone Node.js application, adhering to the API contract defined earlier.

  - Containerize It: Package your new microservice in a Docker container for consistent deployment.

- Implement an API Gateway: This is your traffic cop. Initially, it will route most requests to your monolith. As you extract services, you’ll configure the gateway to route specific requests to the new microservices.

  - Example: All /users/* requests go to the new User Microservice, while all other requests still hit the monolith.

- Test Extensively: This is where you can’t cut corners. Ensure the new service functions correctly, integrates seamlessly, and that the API Gateway is routing traffic as expected.

- Iterate and Repeat: Continue identifying and extracting services one by one, gradually shrinking your monolith until it’s either completely gone or reduced to a very small, manageable core.
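
The gateway routing rule from the /users/* example can be sketched as a small lookup. This is only an illustration of the decision the gateway makes; the hostnames, ports, and the `resolveUpstream` name are placeholders, and a real gateway such as Kong or Nginx would express the same rule in its own configuration.

```javascript
// Route table for the strangler migration: path prefixes that have been
// extracted map to their new service; everything else stays on the monolith.
// Hostnames and ports are placeholders.
const MONOLITH = "http://monolith.internal:3000";
const extractedRoutes = [
  { prefix: "/users", target: "http://user-service.internal:4001" },
];

// Pick the upstream for a request path (without the query string).
// A prefix matches the exact path or any sub-path, so "/users" and
// "/users/42" match, but "/users-export" does not.
function resolveUpstream(path) {
  const route = extractedRoutes.find(
    ({ prefix }) => path === prefix || path.startsWith(prefix + "/")
  );
  return route ? route.target : MONOLITH;
}

console.log(resolveUpstream("/users/42")); // http://user-service.internal:4001
console.log(resolveUpstream("/orders/7")); // http://monolith.internal:3000
```

Each newly extracted service adds one entry to the table, and rolling back an extraction is as simple as removing it again.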

Phase 3: Operations & Management – Keeping the Engines Running

Once you start breaking things up, managing them becomes a different beast.

- Observability is Key: Implement robust logging, monitoring, and tracing across all your services. Tools like Prometheus, Grafana, ELK Stack (Elasticsearch, Logstash, Kibana), or New Relic are your best friends. You need a holistic view of your distributed system.

- Automate Everything (CI/CD): With multiple independent services, manual deployments are a no-go. Set up robust Continuous Integration and Continuous Delivery pipelines for each microservice. Think GitHub Actions, GitLab CI/CD, Jenkins.

- Service Discovery & Registration: How do services find each other? Use service discovery mechanisms (e.g., Consul, Eureka, or Kubernetes’ built-in DNS) so services can dynamically locate and communicate without hardcoding addresses.

- Centralized Configuration Management: Managing configuration for dozens of services manually is a nightmare. Use tools like Kubernetes ConfigMaps or AWS Systems Manager Parameter Store for settings, and HashiCorp Vault for secrets.

- Distributed Data Management: This is a big one. Transactions across multiple services require careful consideration. Explore patterns like the Saga pattern for managing distributed transactions or event-driven architectures (using message queues) to ensure eventual consistency.

- Security Posture: Each microservice needs its own security considerations. Implement proper authentication (e.g., JWT) and authorization, and ensure secure communication between services (mTLS).
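
On the observability point, one small habit pays off quickly: tag every log line with an ID that travels with the request from service to service. Here is a minimal sketch, assuming an `x-correlation-id` header (a common convention, not a standard) and hypothetical helper names. Dedicated tracing tools do this far more thoroughly, but the core idea fits in a few lines.

```javascript
const { randomUUID } = require("node:crypto");

// Reuse the caller's correlation ID if one was sent; otherwise start a new one.
// Node's http module lowercases incoming header names, hence the lowercase key.
function correlationIdFrom(headers) {
  return headers["x-correlation-id"] || randomUUID();
}

// Build one structured (JSON) log line tagged with the service and request.
function logLine(service, correlationId, message) {
  return JSON.stringify({ service, correlationId, message });
}

// Headers to attach to any outgoing call made while handling this request,
// so the next service logs under the same ID.
function outgoingHeaders(correlationId) {
  return { "x-correlation-id": correlationId };
}
```

With this in place, searching your log store for a single correlation ID shows the full path of one request across every service it touched.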

Challenges and Considerations (Keeping It Real)

While the benefits are massive, let’s not pretend it’s a walk in the park. Transitioning to microservices comes with its own set of challenges:

- Increased Operational Complexity: You’re managing many smaller services instead of one large one. This means more deployments, more monitoring, and more components to keep track of.

- Distributed Data Management: As mentioned, maintaining data consistency across independent databases is harder than simple ACID transactions in a monolith.

- Debugging Across Services: Tracing a request through multiple microservices can be complex without proper tracing tools.

- Overhead of Inter-service Communication: Network latency and serialization/deserialization of data can add overhead, which needs to be carefully managed.

- Team Reorganization: Your team structure might need to shift from feature-centric to service-centric, aligning with the “you build it, you run it” philosophy.

Wrapping It Up: The Future is Distributed

Moving your MEAN/Node.js backend to an API-first or micro-API architecture is a marathon, not a sprint. It demands careful planning, a disciplined approach, and a commitment to best practices. But the payoff in scalability, resilience, developer velocity, and technological flexibility is absolutely worth it.

By embracing this paradigm, you’re not just upgrading your backend; you’re future-proofing your entire application ecosystem. You’re building systems that can evolve with your business, adapt to new demands, and empower your teams to innovate faster than ever before. It’s a next-level move for any tech-driven organization aiming for sustained success in this wild, wonderful world of software.

So, are you ready to ditch the monolithic chains and embrace the distributed future? Let’s get building!

FAQs: Your Burning Questions Answered

Is my MEAN/Node.js monolith too small to benefit from microservices?

Not necessarily. While microservices shine in larger, complex applications, even smaller projects can benefit from an API-first approach, which forces you to think about clear contracts and decoupled components. For truly small applications, a well-architected monolith might still be the most efficient choice initially. The key is knowing when the complexity of the monolith starts to outweigh the overhead of microservices.

What are the main risks of transitioning to microservices?

The primary risks include increased operational complexity, challenges with distributed data management and consistency, debugging distributed systems, and the potential for service communication overhead. Without proper planning and tooling, you can end up with a “distributed monolith” that has all the downsides and none of the benefits.

How much does this transition typically cost (in terms of time and resources)?

This is highly variable. It depends on the size and complexity of your existing monolith, the experience of your team, and the chosen tools. It’s a significant investment in time and effort, often taking months or even years for large systems. However, the long-term benefits in terms of developer productivity, scalability, and system resilience often justify the initial cost.

Should I rebuild from scratch or refactor incrementally?

Almost always, choose incremental refactoring using patterns like the Strangler Fig. A complete rebuild (“big bang” rewrite) is notoriously risky, often leading to project failure or significant delays. Incrementally extracting services allows you to deliver value continuously and learn as you go, minimizing risk.

What role does an API Gateway play in this architecture?

An API Gateway is crucial. It acts as the single entry point for all client requests, routing them to the appropriate microservice. It can also handle cross-cutting concerns like authentication, authorization, rate limiting, caching, and request/response transformation, offloading these responsibilities from individual microservices.
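
As one example of a cross-cutting concern, here is a rough sketch of rate limiting using a fixed-window counter. It is an in-memory illustration only: `createRateLimiter` is a hypothetical helper, and a real gateway would typically use its built-in rate limiting backed by a shared store.

```javascript
// Fixed-window rate limiter: each client gets `limit` requests per window.
// `now` is injectable so the logic can be exercised without waiting.
function createRateLimiter(limit, windowMs, now = Date.now) {
  const windows = new Map(); // clientId -> { start, count }
  return function allow(clientId) {
    const t = now();
    const current = windows.get(clientId);
    // No window yet, or the old one has expired: start a fresh window.
    if (!current || t - current.start >= windowMs) {
      windows.set(clientId, { start: t, count: 1 });
      return true;
    }
    if (current.count < limit) {
      current.count += 1;
      return true;
    }
    return false; // over the limit for this window
  };
}

// Example: at most 100 requests per client per minute.
const allowRequest = createRateLimiter(100, 60_000);
```

Because the check lives in the gateway, none of the services behind it needs its own copy of this logic.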

How do I manage data consistency when each microservice has its own database?

This is one of the trickiest parts. You typically achieve “eventual consistency” rather than strong transactional consistency across services. Common approaches include the Saga pattern (a sequence of local transactions, where each transaction updates its own database and publishes an event to trigger the next step, with compensating transactions to undo earlier steps if a later one fails) and event sourcing with message queues (like Kafka or RabbitMQ).
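
To show the shape of a saga, here is a simplified sketch. It runs all steps in a single process (an orchestrated variant) rather than passing events between services, purely to make the compensation logic visible: if a step fails, the steps that already succeeded are undone in reverse order. `runSaga` and the step names are hypothetical.

```javascript
// Run saga steps in order. If one fails, run the compensations of the steps
// that already completed, in reverse order, then report the failure.
// Each step is { name, action, compensate }, where both functions are async.
async function runSaga(steps) {
  const completed = [];
  for (const step of steps) {
    try {
      await step.action();
      completed.push(step);
    } catch (error) {
      for (const done of completed.reverse()) {
        await done.compensate();
      }
      return { ok: false, failedStep: step.name, error: error.message };
    }
  }
  return { ok: true };
}
```

For an order flow this might be three steps such as reserveStock, chargeCard, and createShipment: if createShipment fails, the card charge is refunded and then the stock reservation is released. In a real system each action and compensation would be a call or event handled by a different service, and compensations themselves need retry handling.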

Can I still use Node.js for my microservices?

Absolutely! Node.js is an excellent choice for building microservices. Its non-blocking, event-driven architecture is well-suited for I/O-bound services, and its vast ecosystem (npm) allows for rapid development. You can even mix Node.js services with services written in other languages (polyglot programming) within the same microservice architecture.

What about the front-end? How does this impact my Angular/React/Vue app?

The front-end benefits immensely from an API-first approach. It can consume well-defined APIs without needing to know the backend’s internal structure. For complex UIs, you might even consider a “Backend for Frontend” (BFF) pattern, where a dedicated API gateway or service aggregates data from multiple microservices specifically for a particular client (e.g., a mobile app vs. a web app).
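
Here is a minimal sketch of what a BFF endpoint does, assuming a hypothetical mobile “order summary” screen. The `clients` object stands in for HTTP clients to three separate microservices; its method names and the response fields are made up for illustration.

```javascript
// Hypothetical BFF handler: one call from the mobile app fans out to three
// services and returns a single, screen-shaped response.
async function getOrderSummary(clients, orderId) {
  const order = await clients.orders.getOrder(orderId);
  // The user and product lookups are independent, so run them in parallel.
  const [user, product] = await Promise.all([
    clients.users.getUser(order.userId),
    clients.products.getProduct(order.productId),
  ]);
  // Return only the fields this particular client needs.
  return {
    orderId: order.id,
    customerName: user.name,
    productTitle: product.title,
    quantity: order.quantity,
  };
}
```

The front-end makes one request instead of three, and a web BFF is free to shape the same data differently without touching the underlying services.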